The Real Barrier to On-Chain AI Agents: Not Model Capability but the Trusted Coordination Layer

Phenomenon: Agent Narratives Are Heating Up, but Implementation Efficiency Isn't Keeping Pace

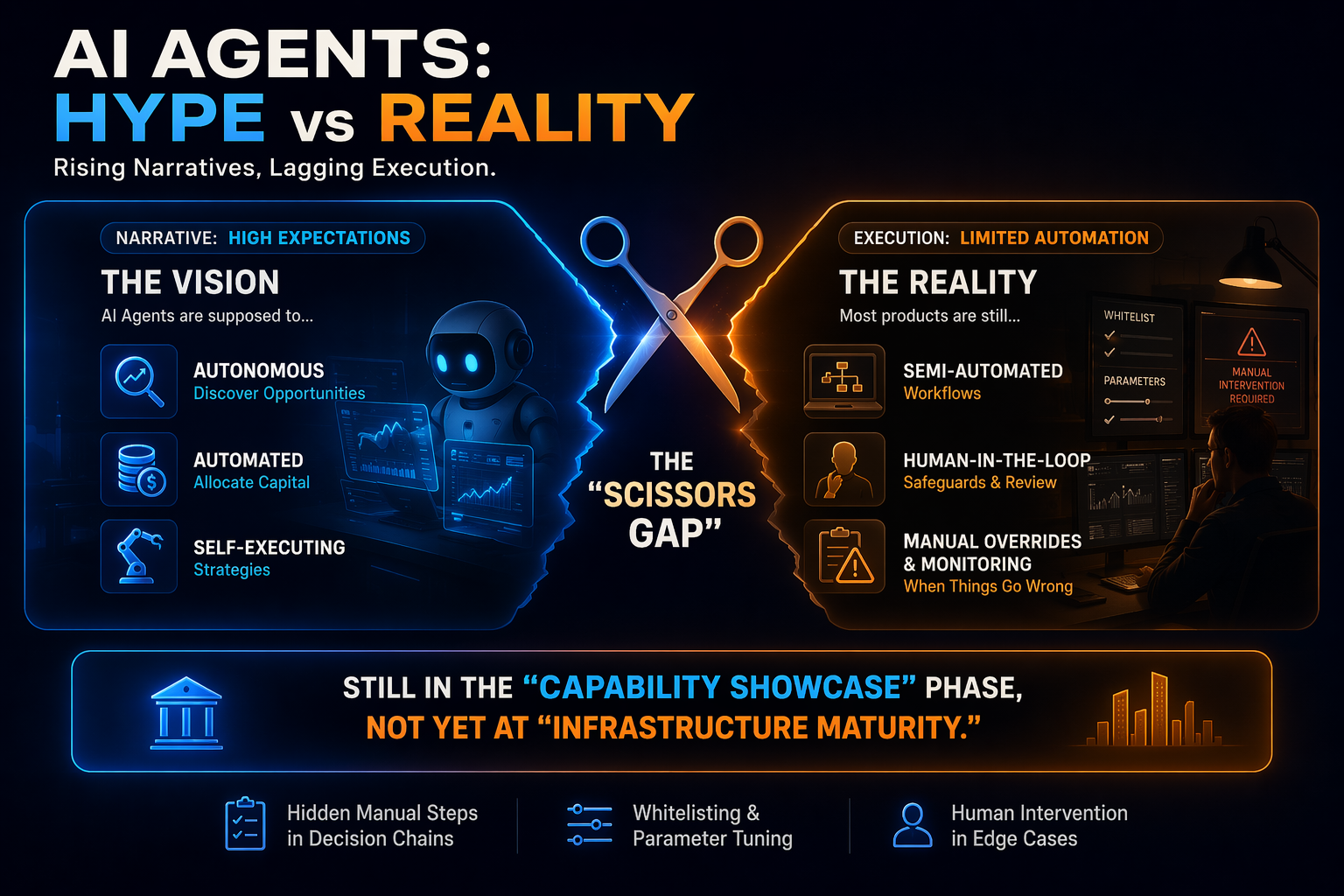

A clear "scissors gap" has opened in the current market, with expectations and delivery pulling apart:

- On the narrative side, agents are expected to "automatically discover opportunities, automatically allocate funds, and automatically execute strategies."
- On the execution side, most products remain at the stage of "semi-automated workflows with manual fallback."

This indicates the industry is still in a "capability demonstration phase" and has yet to enter the "infrastructure shaping phase."

Many products appear automated, but their core decision-making still relies heavily on manual pre-judgment—such as whitelist filtering, strategy parameter maintenance, and manual intervention during abnormal events.

Misconception: The Core Issue Is Not Model Weakness, but Missing System Coordination

A common explanation for implementation challenges is that "the model isn't smart enough." This only addresses part of the issue. The deeper constraint is that, regardless of how powerful the model is, it still needs a usable operating environment to act in.

For on-chain agents to complete a full task, they must clear at least four hurdles:

- Identify the targets they can interact with;
- Confirm targets are authentic and trustworthy;
- Understand the economic significance of those targets;
- Execute under risk constraints and verify outcomes.

Today's pain point is that on-chain infrastructure offers limited support for the first three steps. In other words, the problem isn't "can it place orders," but "is there a reliable upstream cognition and constraint system."

Four Core Frictions: Discovery, Credit, Data, Execution

Discovery Friction: The Open World Is Vast, but Relevant Opportunities Are Scarce

Permissionless networks allow anyone to deploy contracts. From an agent's perspective, legitimate protocols, testnet contracts, malicious forks, and shell projects are nearly indistinguishable in terms of discoverability. "Seeing a contract" isn't the same as "seeing an opportunity," and certainly not "seeing an executable opportunity."

Traditional quant systems operate within closed sets because strategy boundaries are predefined.

For agents to dynamically discover opportunities at runtime, they must take on the additional cost of "relevance judgment"—the essence of discovery friction.

Credit Friction: On-Chain Addresses Are Verifiable, Economic Identity Is Not

Blockchains can verify signatures and state changes, but cannot verify "is this an official deployment" or "is this token the canonical asset the market recognizes." In practice, credit judgments rely heavily on frontends, documentation, social reputation, and ecosystem consensus. For humans, this is an experience-based system; for agents, it's a missing field.

As a result, agents face two high-risk scenarios at the credit layer:

- Interacting with incorrect addresses, counterfeit tokens, or anomalous counterparties;
- Continuing to operate under outdated assumptions after governance or permission changes.

Such errors in capital systems are not minor discrepancies—they're direct sources of capital loss.
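
Both scenarios can be expressed as a pre-interaction check. The sketch below is illustrative only: `RegistryEntry`, `ObservedState`, and `credit_check` are hypothetical names, and it assumes some trusted party has already recorded each vetted contract's code hash and admin address. How that registry is produced and kept honest is exactly the missing infrastructure discussed here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RegistryEntry:
    """One vetted contract, as recorded when it was last reviewed (hypothetical schema)."""
    protocol: str
    address: str    # lowercase hex address
    code_hash: str  # hash of the implementation code at review time
    admin: str      # governance/admin address at review time


@dataclass(frozen=True)
class ObservedState:
    """What the agent reads from the chain right before acting."""
    address: str
    code_hash: str
    admin: str


def credit_check(registry: dict[str, RegistryEntry], observed: ObservedState) -> list[str]:
    """Return reasons to refuse; an empty list means the target passes."""
    entry = registry.get(observed.address.lower())
    if entry is None:
        # Scenario 1: unknown address, possibly a counterfeit or a fork.
        return ["address not in registry"]
    reasons = []
    # Scenario 2: the contract changed after it was reviewed.
    if observed.code_hash != entry.code_hash:
        reasons.append("implementation changed since review")
    if observed.admin.lower() != entry.admin.lower():
        reasons.append("admin changed since review")
    return reasons
```

An agent would call `credit_check` before every interaction and refuse to proceed on any non-empty result, rather than caching a one-time approval.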

Data Friction: Having Data Doesn't Mean Having Actionable Data

On-chain data is abundant, but economic semantics are not standardized. Even in lending markets, different protocols may use different interface structures, state fields, units, and update frequencies.

For agents to compare across protocols, they must first perform extensive semantic reconstruction:

- Which field represents actual available liquidity;
- Which parameter impacts the health factor;
- Which interest rate reflects realizable return, not just nominal display.

Without a standardized semantic layer, agents expend significant computation and time on "data assembly," resulting in lower decision timeliness and accuracy.
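
To make "semantic reconstruction" concrete, here is a minimal sketch that maps two lending-market payloads into one comparable model. Both payload formats, all field names (`totalSupplied`, `liquidityRateRay`, `cash`, `supplyRateBps`), and the `LendingMarket` type are invented for illustration and do not describe any real protocol's interface.

```python
from dataclasses import dataclass
from decimal import Decimal

RAY = Decimal(10) ** 27  # fixed-point scale assumed for hypothetical protocol A


@dataclass(frozen=True)
class LendingMarket:
    """Unified view: the same fields and units regardless of source protocol."""
    protocol: str
    asset: str
    available_liquidity: Decimal  # whole tokens available right now
    supply_apr: Decimal           # annual rate as a fraction, e.g. 0.05 for 5%


def from_protocol_a(raw: dict) -> LendingMarket:
    # Hypothetical format: base-unit integers and a ray-scaled annual rate.
    scale = Decimal(10) ** raw["decimals"]
    supplied = Decimal(raw["totalSupplied"]) / scale
    borrowed = Decimal(raw["totalBorrowed"]) / scale
    return LendingMarket("protocol_a", raw["symbol"], supplied - borrowed,
                         Decimal(raw["liquidityRateRay"]) / RAY)


def from_protocol_b(raw: dict) -> LendingMarket:
    # Hypothetical format: whole-token cash and a rate in basis points.
    return LendingMarket("protocol_b", raw["asset"], Decimal(raw["cash"]),
                         Decimal(raw["supplyRateBps"]) / Decimal(10_000))


a = from_protocol_a({"symbol": "USDC", "decimals": 6,
                     "totalSupplied": 5_000_000_000_000,
                     "totalBorrowed": 3_000_000_000_000,
                     "liquidityRateRay": 4 * 10**25})
b = from_protocol_b({"asset": "USDC", "cash": "1500000.5", "supplyRateBps": 450})
best = max([a, b], key=lambda m: m.supply_apr)
```

Only after both markets are in the same units can the agent compare them; every adapter like these is work that a shared semantic layer would otherwise do once for everyone.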

Execution Friction: A Successful Trade Isn't the Same as Completing the Task

A major misconception in on-chain execution is equating "transaction confirmed on-chain" with "goal achieved." In reality, agent tasks are often multi-step processes:

Approval -> Routing -> Swap -> Deposit -> Rebalance -> Risk Check.

Any slippage, delay, liquidity change, or state drift at any step can cause the final outcome to diverge from the intended goal.

Therefore, what the execution layer truly needs is "strategy constraints and post-execution verification," not just "broadcasting a transaction."
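
A small worked example shows why per-step success is not enough. The sketch assumes each step loses a small relative fraction to fees or slippage, and that the goal is stated as a target position with a tolerated shortfall; `Goal`, `expected_after_steps`, `verify_outcome`, and the loss figures are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Goal:
    target_position: float  # desired final deposit, in asset units
    max_shortfall: float    # tolerated relative shortfall, e.g. 0.01 for 1%


def expected_after_steps(amount_in: float, step_losses: list[float]) -> float:
    """Compound per-step relative losses (fees, slippage) across the pipeline."""
    out = amount_in
    for loss in step_losses:
        out *= 1.0 - loss
    return out


def verify_outcome(goal: Goal, observed_position: float) -> bool:
    """True only if the observed end state is within tolerance of the goal."""
    floor = goal.target_position * (1.0 - goal.max_shortfall)
    return observed_position >= floor


goal = Goal(target_position=1000.0, max_shortfall=0.01)
# Three steps, each losing well under 1% on its own.
final = expected_after_steps(1000.0, [0.003, 0.004, 0.005])
ok = verify_outcome(goal, final)
```

Here every step stays under 0.5% loss, yet the compounded result is roughly 988, below the 990 floor, so `ok` is false. A check on the end state catches what step-level success signals miss.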

Why Friction Will Be Even More Pronounced in 2026

What makes 2026 unique is that agents are rapidly evolving from "information tools" to "capital executors."

As permissions shift from "read" to "write," the risk shifts from "answering questions incorrectly" to "misallocating funds."

Additionally, three industry trends are amplifying the problem:

- Multi-chain and cross-chain environments are becoming more complex, with growing interface heterogeneity;
- Protocol innovation is accelerating, but standardization is lagging behind;
- Market expectations for agent commercialization are rising, while the tolerance for error is shrinking.

The result: the hotter the narrative, the faster infrastructure shortcomings are exposed.

Which Scenarios Will Arrive First, and Which Remain High-Risk

Scenarios Most Likely to Be Implemented First

- Fund rebalancing within whitelisted protocols;
- Treasury management involving single chains, few protocols, and low-frequency trades;
- Automated payment and settlement tasks with clearly defined objectives and boundaries.

These scenarios share clear environmental boundaries, manageable exception spaces, and well-defined responsibilities.

Scenarios That Remain High-Risk

- Cross-chain high-frequency arbitrage and dynamic discovery of unfamiliar protocols;
- Autonomous allocation across the entire market without whitelist constraints;
- Fully automated strategy switching in high-leverage, low-liquidity environments.

These scenarios aren't off-limits forever, but at present, the "foundational infrastructure prerequisites" are not yet in place.

A More Realistic Path to Implementation: Constrain First, Expand Later

The most feasible path for on-chain agent adoption isn't immediate full autonomy, but a phased approach.

Stage 1: Trusted Object Layer

First, address "who to interact with":

- Standardized address registries;
- Token and protocol authenticity proofs;
- Real-time monitoring of upgradable contracts and permission changes.

Stage 2: Semantic Data Layer

Next, address "what to understand":

- Unified economic object models across protocols;
- Standardized risk parameters;
- Traceable, low-latency data indexing and snapshots.

Stage 3: Constrained Execution Layer

Then, address "how to act":

- Intent expression and strategy constraint engines;
- Multi-step execution orchestration and failure rollbacks;
- Pre-trade simulation and post-trade outcome verification.
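
One possible shape for this layer is a plan that is checked against constraints before anything runs and unwound if a step fails. The following is a sketch under stated assumptions, not a reference design: `Constraints`, `Step`, `violations`, and `execute` are hypothetical, and the `run` and `undo` callables stand in for real transaction logic. Steps bundled into a single transaction revert atomically on their own; compensating actions like `undo` matter when a plan spans several transactions.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Constraints:
    allowed_protocols: frozenset[str]
    max_notional: float


@dataclass
class Step:
    name: str
    protocol: str
    notional: float
    run: Callable[[], None]   # performs the action; raises on failure
    undo: Callable[[], None]  # compensating action for a completed step


def violations(plan: list[Step], c: Constraints) -> list[str]:
    """Check the whole plan against the constraints before anything runs."""
    out = []
    for s in plan:
        if s.protocol not in c.allowed_protocols:
            out.append(f"{s.name}: protocol {s.protocol} not allowed")
        if s.notional > c.max_notional:
            out.append(f"{s.name}: notional {s.notional} exceeds limit")
    return out


def execute(plan: list[Step], c: Constraints) -> bool:
    """Run steps in order; on failure, undo completed steps in reverse."""
    if violations(plan, c):
        return False
    done: list[Step] = []
    for s in plan:
        try:
            s.run()
            done.append(s)
        except Exception:
            for prev in reversed(done):
                prev.undo()
            return False
    return True
```

Rejecting the whole plan up front keeps the agent from starting a sequence it is not permitted to finish, which is cheaper than unwinding a half-executed one.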

Stage 4: Responsibility and Governance Layer

Finally, address "what to do when things go wrong":

- Permission grading and circuit breaker mechanisms;
- Operation auditing and responsibility attribution;
- Human-machine collaborative takeover procedures.
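
These three items can be combined in one small mechanism. The sketch below assumes a simple loss budget as the trip condition and three permission tiers; the `Tier` levels, the `CircuitBreaker` class, and the threshold are all hypothetical choices, and a production system would likely trip on more signals than realized loss.

```python
from dataclasses import dataclass, field
from enum import IntEnum


class Tier(IntEnum):
    READ_ONLY = 0
    LIMITED_WRITE = 1
    FULL_WRITE = 2


@dataclass
class CircuitBreaker:
    max_cumulative_loss: float  # loss budget before the breaker trips
    cumulative_loss: float = 0.0
    tripped: bool = False
    audit_log: list[str] = field(default_factory=list)

    def record(self, action: str, pnl: float) -> None:
        """Log every action; trip once realized losses exceed the budget."""
        self.audit_log.append(f"{action}: pnl={pnl}")
        if pnl < 0:
            self.cumulative_loss += -pnl
        if self.cumulative_loss > self.max_cumulative_loss:
            self.tripped = True
            self.audit_log.append("breaker tripped: human takeover required")

    def effective_tier(self, granted: Tier) -> Tier:
        """A tripped breaker downgrades the agent to read-only."""
        return Tier.READ_ONLY if self.tripped else granted
```

The audit log gives a trail for attributing responsibility, and the downgrade to `READ_ONLY` is the handoff point where a human operator takes over.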

Only by progressively building out these four layers can agents move from "demonstration" to "trusted delegation."

Conclusion: The Success of On-Chain Agents Depends on Trusted Execution Infrastructure

AI agents are difficult to implement on-chain not because blockchains can't execute or models can't reason, but because there's no industrial-grade integration layer connecting the two.

At this stage, the most important evaluation criteria are not "how much can agents do," but:

- Can they avoid losing control in abnormal situations;
- Can they maintain consistent interpretation across multi-protocol environments;
- Can execution results be mapped back to verifiable targets;
- Can risk responsibility be assigned to a governable mechanism.

Thus, the next competitive focus will shift from "who tells the best agent story" to "who first completes the trusted execution stack."

On this path, platforms that first enable constrained scenarios and establish stable closed loops will be best positioned to become long-term infrastructure layers. Products that rely on high-autonomy narratives but lack robust risk control and semantic capabilities will continue to face dual bottlenecks in implementation and trust.
